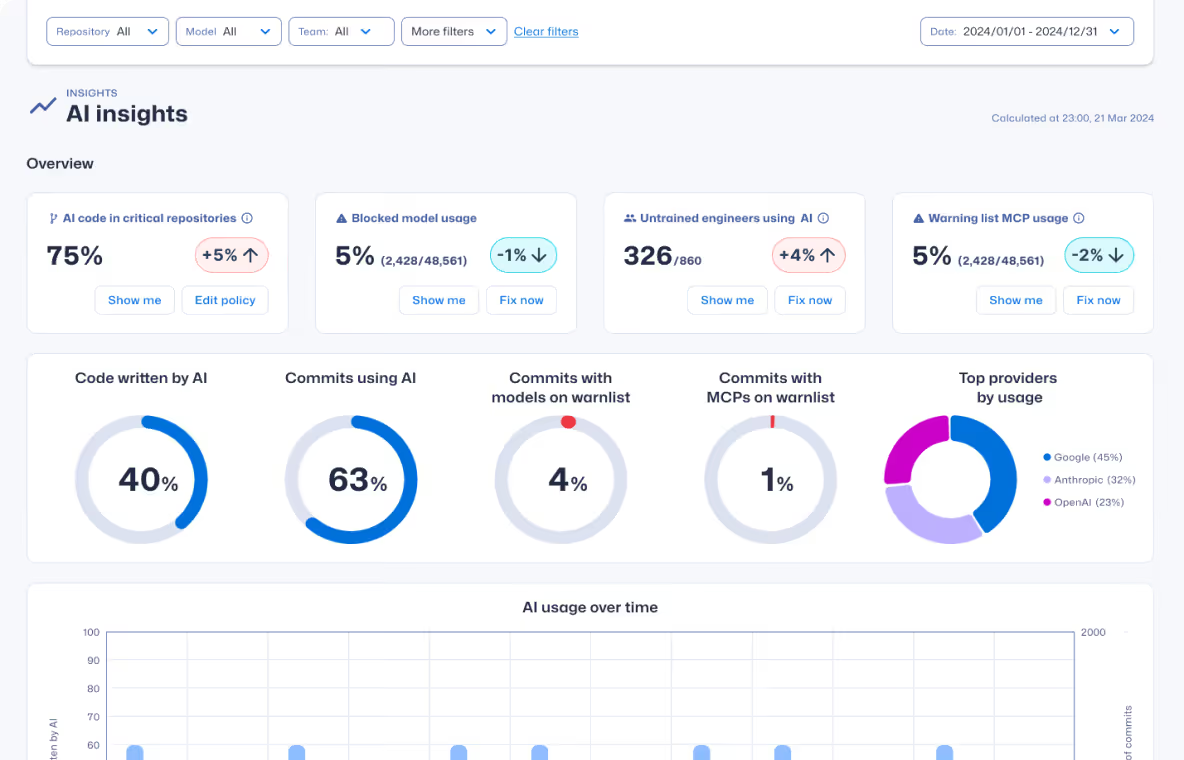

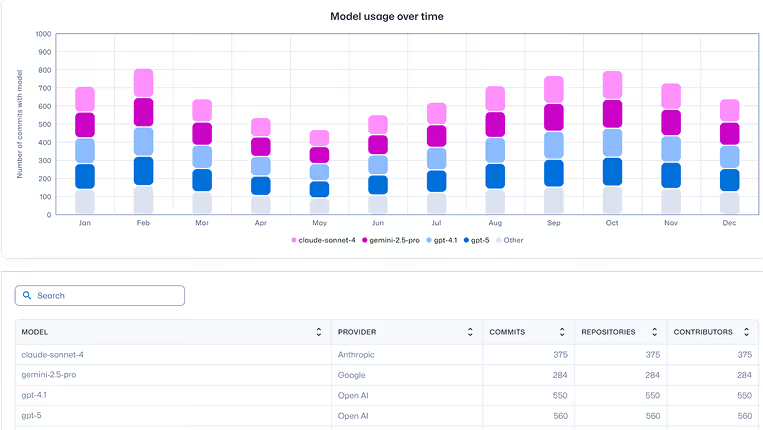

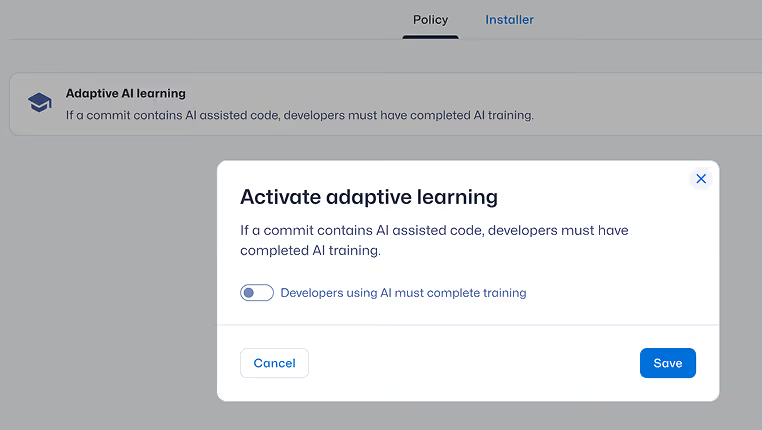

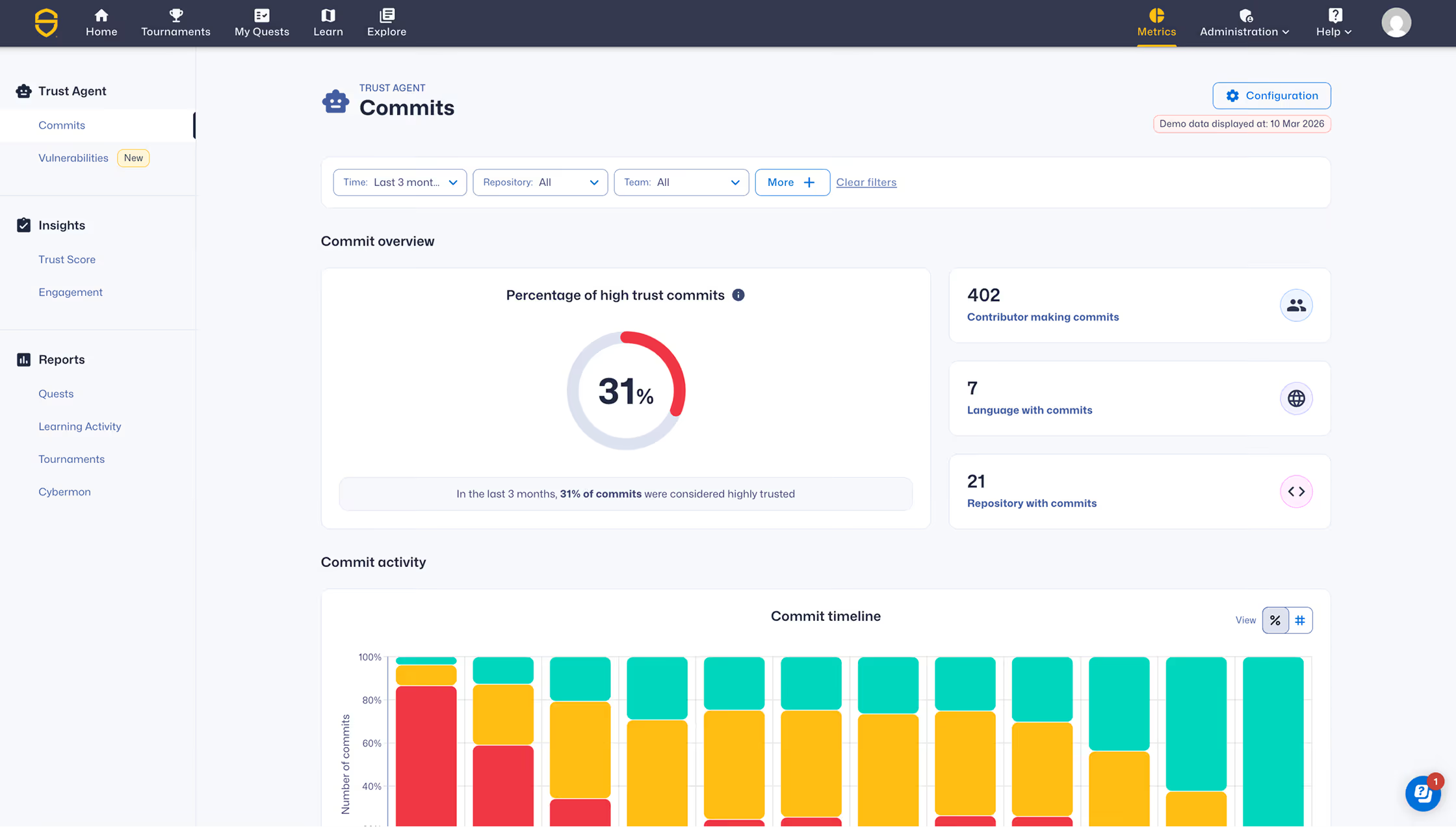

Trust Agent: AI captures AI usage signals and commit metadata — not source code or prompts — preserving developer privacy while enabling governance at scale.

It makes AI-assisted development visible, auditable, and manageable across the secure SDLC, helping organizations identify and reduce developer risk before code reaches production.