AI has expanded your software supply chain

AI coding assistants, LLMs, and MCP-connected agents now generate production code across the SDLC. Development velocity has accelerated — but governance has not kept pace. AI has become an ungoverned contributor to your software supply chain.

Most organizations cannot clearly answer:

- Which AI models generated specific commits

- Whether those models consistently produce secure code

- Which MCP servers are active and what they access

- Whether AI-assisted commits meet secure coding standards

- How AI usage impacts overall software risk

Without structured AI software governance, organizations face fragmented ownership, limited visibility, and growing exposure.

AI-assisted development increases code velocity, but without enforceable oversight it also raises the risk of introduced vulnerabilities and widens exposure through the model supply chain.

Oversight of AI-driven development

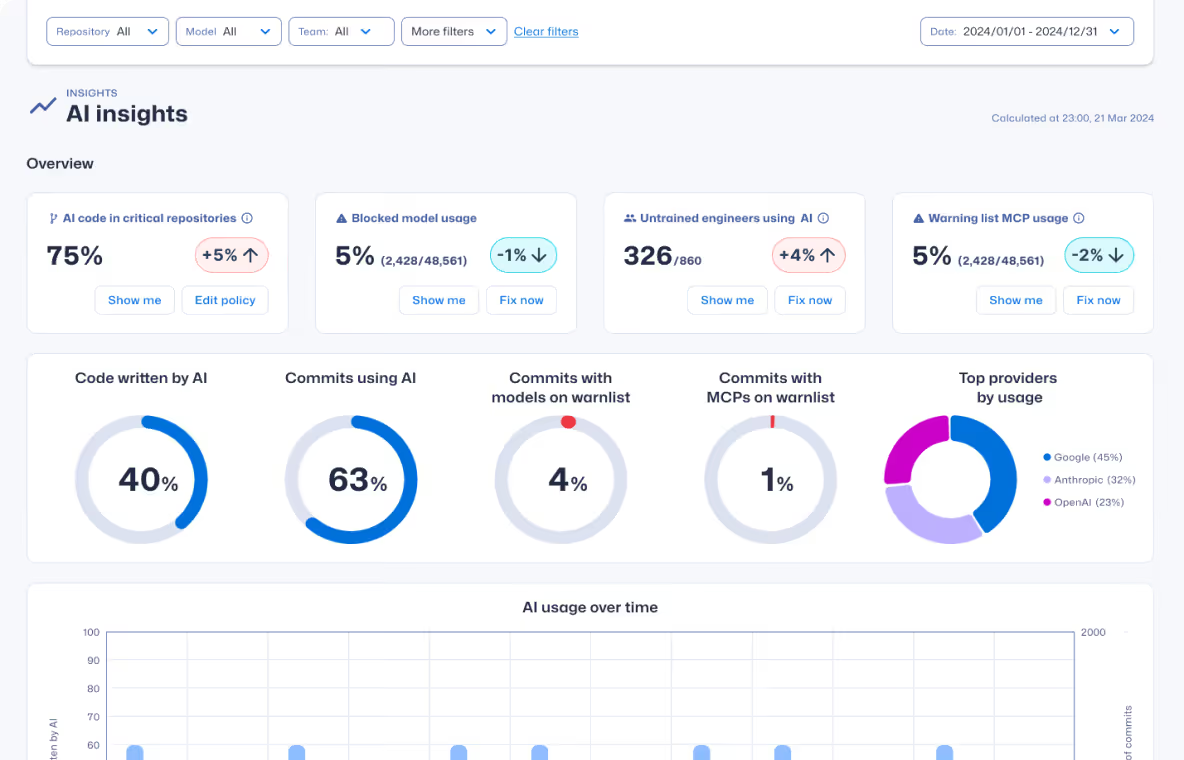

AI software governance makes AI-generated code visible, correlates AI-assisted commits with risk at the commit level, and aligns AI-driven development with security policy. It connects AI usage visibility, risk intelligence, and developer capability insights across the software development lifecycle.

It enables organizations to:

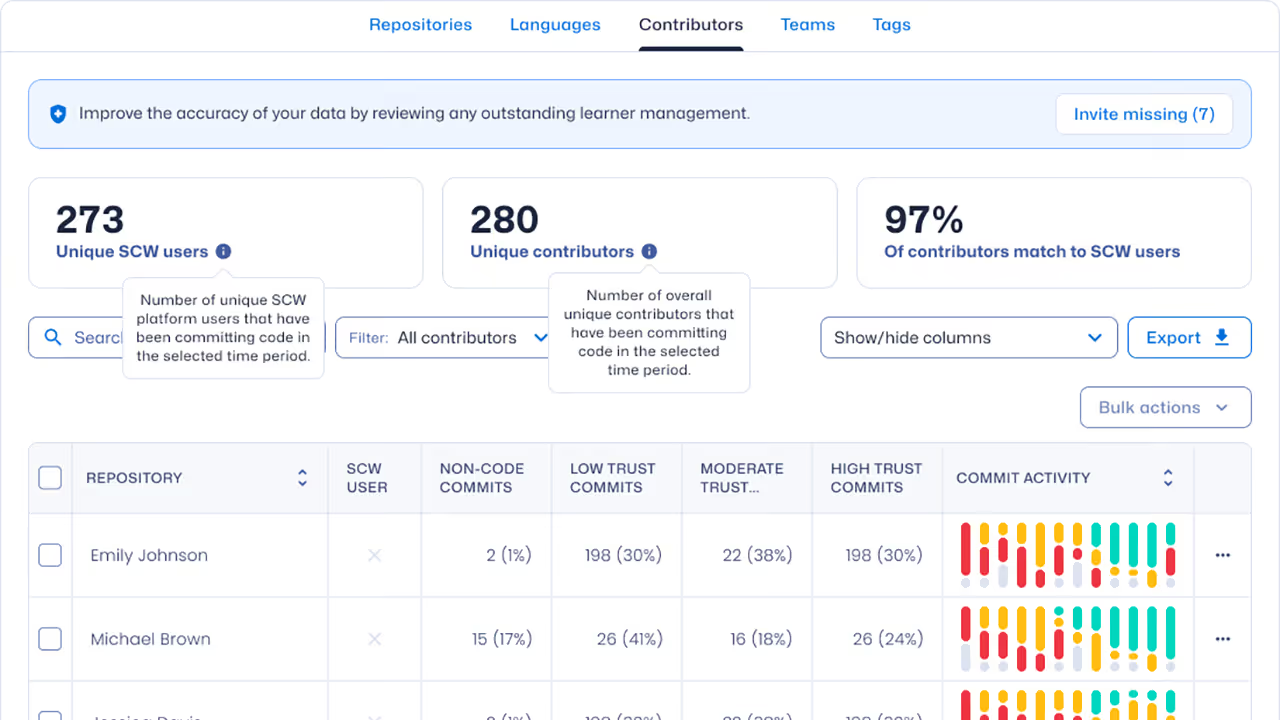

- Gain visibility into where and how AI is used to generate code

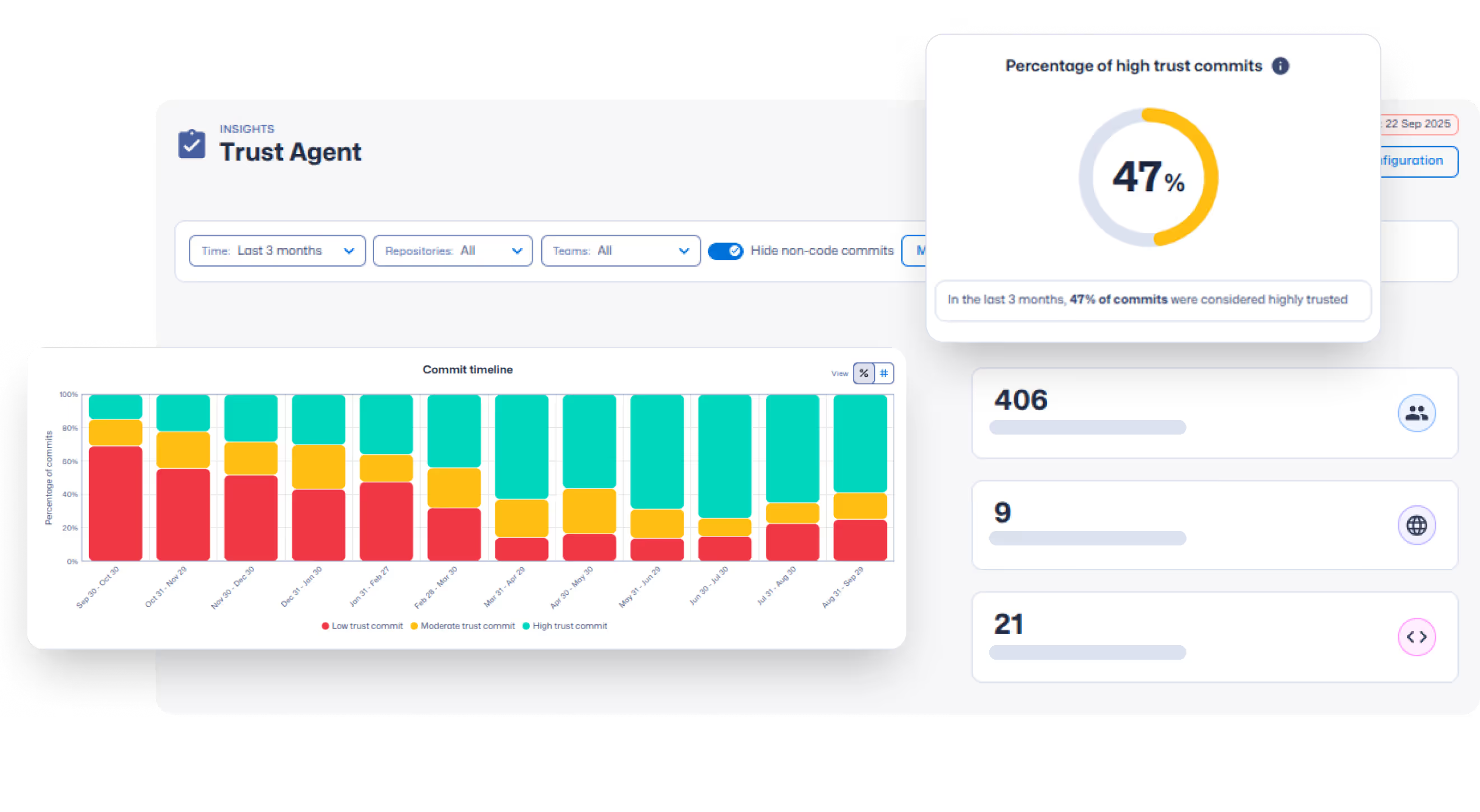

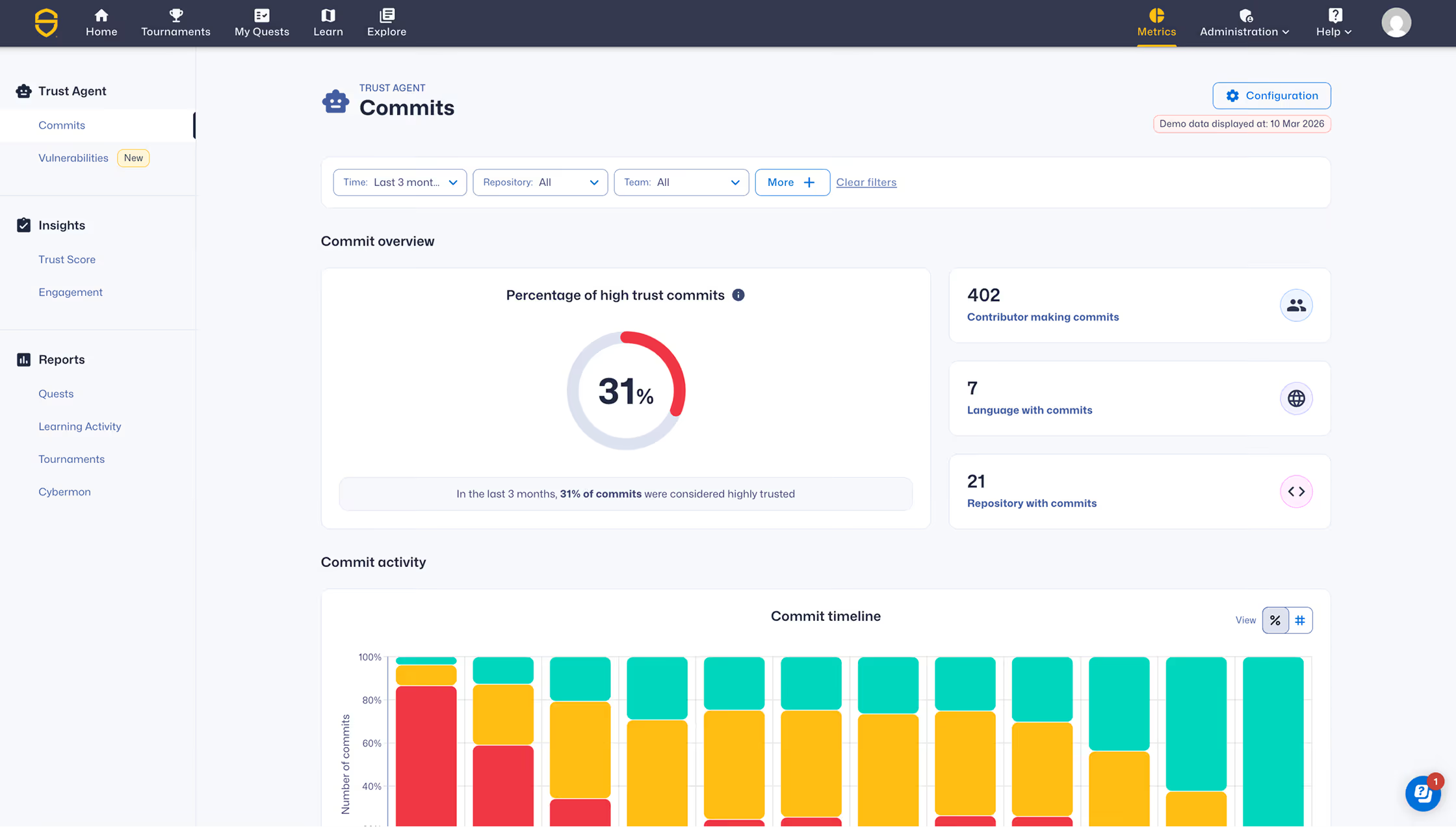

- Correlate AI-assisted commits with software risk

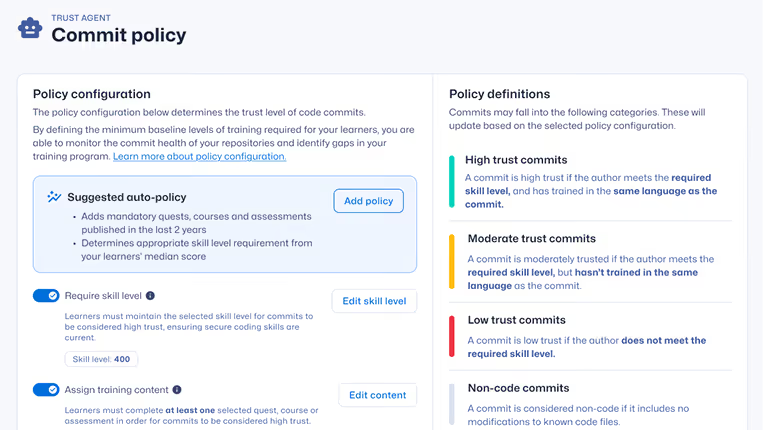

- Define AI usage policy and governance standards

- Create accountability across human and AI-generated code

Govern and securely scale AI-driven software development

Traditional application security tools detect vulnerabilities after code is written. AI software governance provides visibility into AI model usage, correlates risk signals at commit time, and helps organizations align development with secure coding policies.

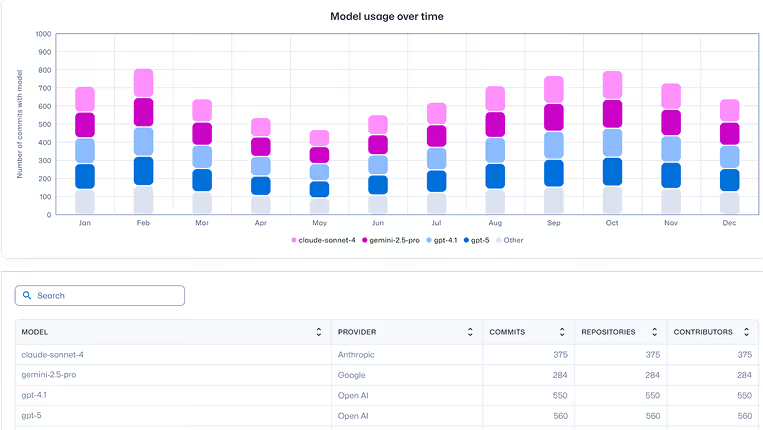

AI tool & model traceability

Gain visibility into which AI tools contribute code — creating a verifiable AI SBOM.

Shadow AI detection

Identify unsanctioned AI tools operating outside approved governance policies.

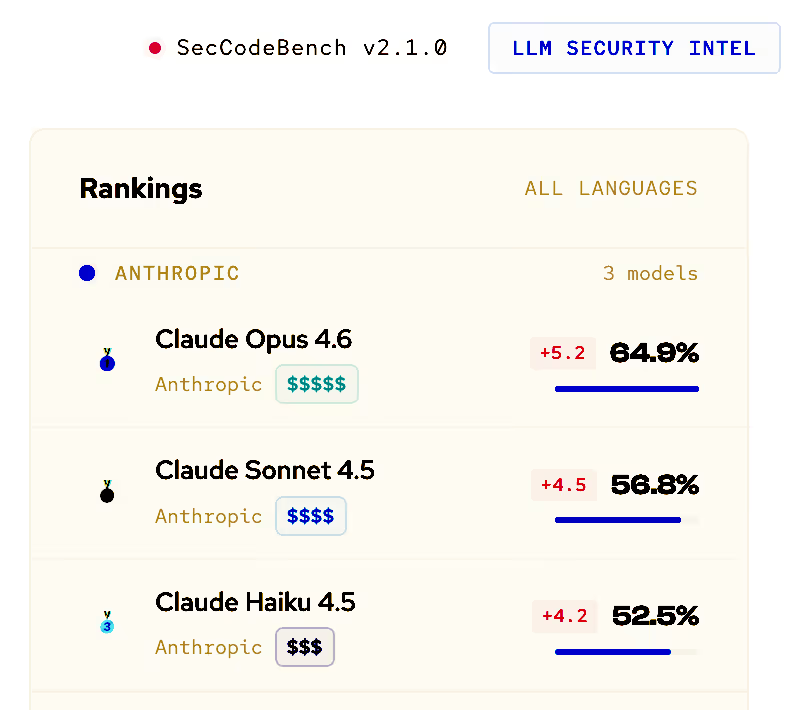

LLM security benchmarking

Get real-world AI performance metrics to guide approved model usage.

Risk scoring

Correlate AI-assisted commits with risk signals and trigger targeted learning to reduce vulnerabilities.

MCP server visibility

Identify Model Context Protocol servers and understand how AI agents interact with internal systems.
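As a rough illustration of what an MCP server inventory involves, the sketch below reads a client configuration and lists the servers it declares. It assumes a JSON file with a top-level `mcpServers` object, a layout some MCP clients use; other clients use different keys. The function names and the allowlist are hypothetical, and this is not a description of how any particular product performs discovery.

```python
import json


def list_mcp_servers(config_text: str) -> dict:
    """Map each declared server name to the URL or command line it is configured with.

    Assumes a top-level "mcpServers" object (an assumption; layouts vary by client).
    """
    config = json.loads(config_text)
    servers = config.get("mcpServers", {})
    inventory = {}
    for name, entry in servers.items():
        if "url" in entry:
            # Remote server reached over HTTP
            inventory[name] = entry["url"]
        else:
            # Local server launched as a subprocess
            parts = [entry.get("command", "")] + entry.get("args", [])
            inventory[name] = " ".join(parts)
    return inventory


def unapproved(inventory: dict, allowlist: set) -> list:
    """Return the names of servers that are not on the (hypothetical) allowlist."""
    return sorted(name for name in inventory if name not in allowlist)
```

Comparing the inventory against an approved list is one simple way to surface servers that nobody has reviewed.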

Developer discovery

Continuously identify developers and commit patterns to strengthen accountability and risk visibility.

Govern AI-assisted development in four steps

Connect & observe

Integrate with repositories and CI pipelines to monitor commit metadata, AI model usage, and contributor activity.
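To make "monitoring commit metadata" concrete, here is a minimal sketch of one possible signal: commit message trailers that some AI tools add. The trailer patterns and function names are illustrative assumptions, not a standard and not a description of how any product detects AI usage. Many AI-assisted commits carry no marker at all, so a real system would need other signals as well.

```python
import re

# Hypothetical marker patterns; tools label their commits differently, if at all.
AI_TRAILER_PATTERNS = [
    re.compile(r"^co-authored-by:.*\b(copilot|claude|cursor)\b", re.IGNORECASE),
    re.compile(r"^assisted-by:", re.IGNORECASE),
]


def is_ai_assisted(commit_message: str) -> bool:
    """Return True if any line of the message matches one of the trailer patterns."""
    for line in commit_message.splitlines():
        stripped = line.strip()
        if any(pattern.search(stripped) for pattern in AI_TRAILER_PATTERNS):
            return True
    return False


def summarize(commits):
    """Given (author, message) pairs, return {author: (total_commits, flagged_commits)}."""
    counts = {}
    for author, message in commits:
        total, flagged = counts.get(author, (0, 0))
        counts[author] = (total + 1, flagged + int(is_ai_assisted(message)))
    return counts
```

The pairs passed to `summarize` could come from a repository's commit log. Per-author totals are one way to connect AI usage with contributor activity.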

Benchmark & score

Evaluate AI-assisted commits against vulnerability benchmarks and developer Trust Score® metrics.

Purpose-built for AI governance teams

Designed for the leaders responsible for securing software development as AI becomes a core contributor to production code.

Govern AI-driven development before it ships

See where AI tools generate code, correlate commits with risk signals, and maintain visibility across your AI software supply chain.

Control, measure, and secure AI-assisted software development

Learn how Secure Code Warrior provides AI observability, policy enforcement, and governance across AI-assisted development workflows.