SCW Trust Agent: AI - Visibility and Governance for Your AI-Assisted SDLC

The widespread adoption of AI coding tools is transforming software development. With 78% of developers [1] now using AI to increase productivity, the speed of innovation has never been greater. But this rapid acceleration comes with a critical risk.

Studies reveal that as much as 50% of functionally correct, AI-generated code is insecure [2]. This isn’t a small bug; it’s a systemic challenge. It means every time a developer uses a tool like GitHub Copilot or ChatGPT, they could be unknowingly introducing new vulnerabilities into your codebase. The result is a dangerous mix of speed and security risks that most organizations are not equipped to manage.

The Challenge of “Shadow AI”

Without a way to manage AI coding tool usage, CISOs, AppSec leaders, and engineering leaders are exposed to new risks they can't see or measure. How can you answer crucial questions like:

- What percentage of our code is AI-generated?

- Which MCP (Model Context Protocol) servers are being used?

- What unapproved models are being used?

- What vulnerabilities are being generated by different models?

The lack of visibility and governance creates a new layer of risk and uncertainty. It’s the very definition of “shadow IT,” but for your codebase.
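To make these questions concrete, here is a minimal sketch of how they could be answered once per-commit attribution data exists. Everything in it is an assumption for illustration: the `CommitRecord` fields, the `APPROVED_MODELS` allow-list, and the sample data are hypothetical and do not describe Trust Agent: AI's actual data model or API.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CommitRecord:
    """Hypothetical per-commit attribution record (not a real product schema)."""
    repo: str
    lines_added: int
    ai_lines_added: int          # lines attributed to an AI coding tool
    model: Optional[str] = None  # model that produced the AI lines, if known
    vulnerabilities: int = 0     # findings traced back to this commit


# Hypothetical allow-list an organization might maintain.
APPROVED_MODELS = {"model-a", "model-b"}


def ai_share(commits):
    """Percentage of added lines that were AI-generated."""
    total = sum(c.lines_added for c in commits)
    ai = sum(c.ai_lines_added for c in commits)
    return 100.0 * ai / total if total else 0.0


def unapproved_models(commits):
    """Models seen in commits that are not on the allow-list."""
    return {c.model for c in commits if c.model and c.model not in APPROVED_MODELS}


def vulnerabilities_by_model(commits):
    """Total vulnerabilities traced to commits produced with each model."""
    counts = Counter()
    for c in commits:
        if c.model:
            counts[c.model] += c.vulnerabilities
    return dict(counts)


# Made-up sample data.
commits = [
    CommitRecord("payments", 100, 40, "model-a", 1),
    CommitRecord("payments", 50, 0),
    CommitRecord("web", 50, 50, "model-x", 3),
]

print(f"AI-generated share: {ai_share(commits):.1f}%")
print("Unapproved models:", unapproved_models(commits))
print("Vulnerabilities by model:", vulnerabilities_by_model(commits))
```

The hard part in practice is producing reliable attribution records in the first place; the arithmetic on top of them is simple, which is why visibility is the prerequisite for every question in the list.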

Our Solution – Trust Agent: AI

We believe you can have both speed and security. We're proud to launch Trust Agent: AI, a powerful new capability of our Trust Agent product that provides the deep observability and control you need to confidently embrace AI in your software development lifecycle. Using a unique combination of signals, Trust Agent: AI provides:

- Visibility: See which developers are using which AI coding tools, LLMs and MCPs, and on what codebases. No more "shadow AI."

- Risk Metrics: Connect AI-generated code to the contributing developer's skill level and to the vulnerabilities it introduces, so you can understand the true risk at the commit level.

- Governance: Automate policy enforcement to ensure AI-enabled developers meet secure coding standards.
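As a rough illustration of what automated policy enforcement can mean, the sketch below checks a single commit against a simple rule set. It is an assumption-laden example, not the product's implementation: the `Policy` and `Commit` types, the numeric score scale, the threshold of 600, and the tool names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Policy:
    """Hypothetical governance policy for AI-assisted commits."""
    min_trust_score: int       # minimum developer score for AI-assisted commits
    approved_tools: frozenset  # AI coding tools the organization allows


@dataclass(frozen=True)
class Commit:
    """Hypothetical commit metadata."""
    developer: str
    trust_score: int               # developer's security proficiency score
    ai_tool: Optional[str] = None  # None means no AI assistance was detected


def evaluate(commit, policy):
    """Return a list of policy violations; an empty list means the commit passes."""
    violations = []
    if commit.ai_tool is None:
        return violations  # the policy only governs AI-assisted commits
    if commit.ai_tool not in policy.approved_tools:
        violations.append(f"unapproved AI tool: {commit.ai_tool}")
    if commit.trust_score < policy.min_trust_score:
        violations.append(
            f"trust score {commit.trust_score} is below the required {policy.min_trust_score}"
        )
    return violations


policy = Policy(min_trust_score=600, approved_tools=frozenset({"tool-a"}))

for commit in (
    Commit("alice", 720, "tool-a"),
    Commit("bob", 450, "tool-b"),
    Commit("carol", 300),
):
    result = evaluate(commit, policy)
    print(commit.developer, "PASS" if not result else result)
```

Returning the full list of violations, rather than stopping at the first one, lets a reviewer see every reason a commit was flagged in a single pass.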

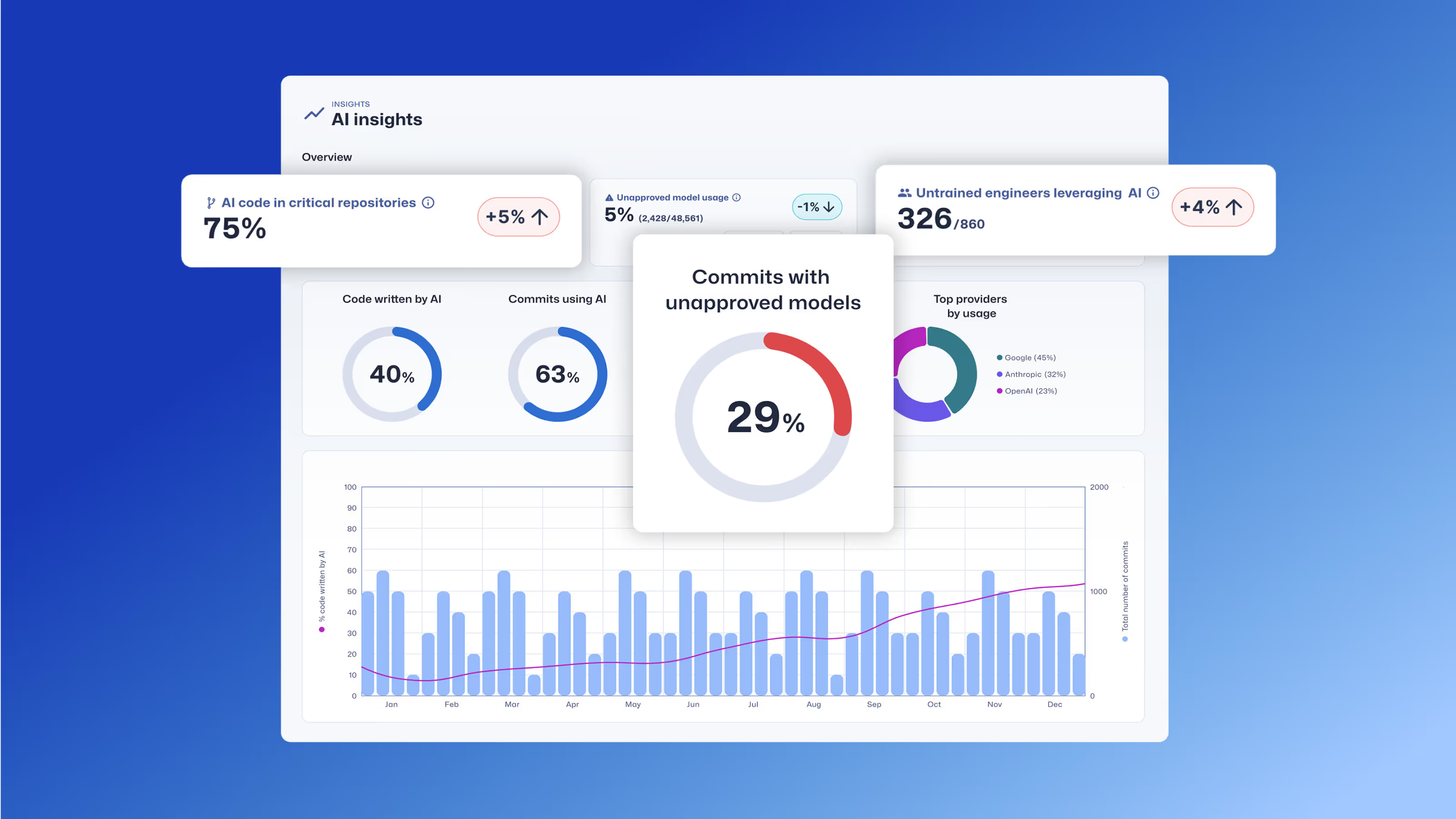

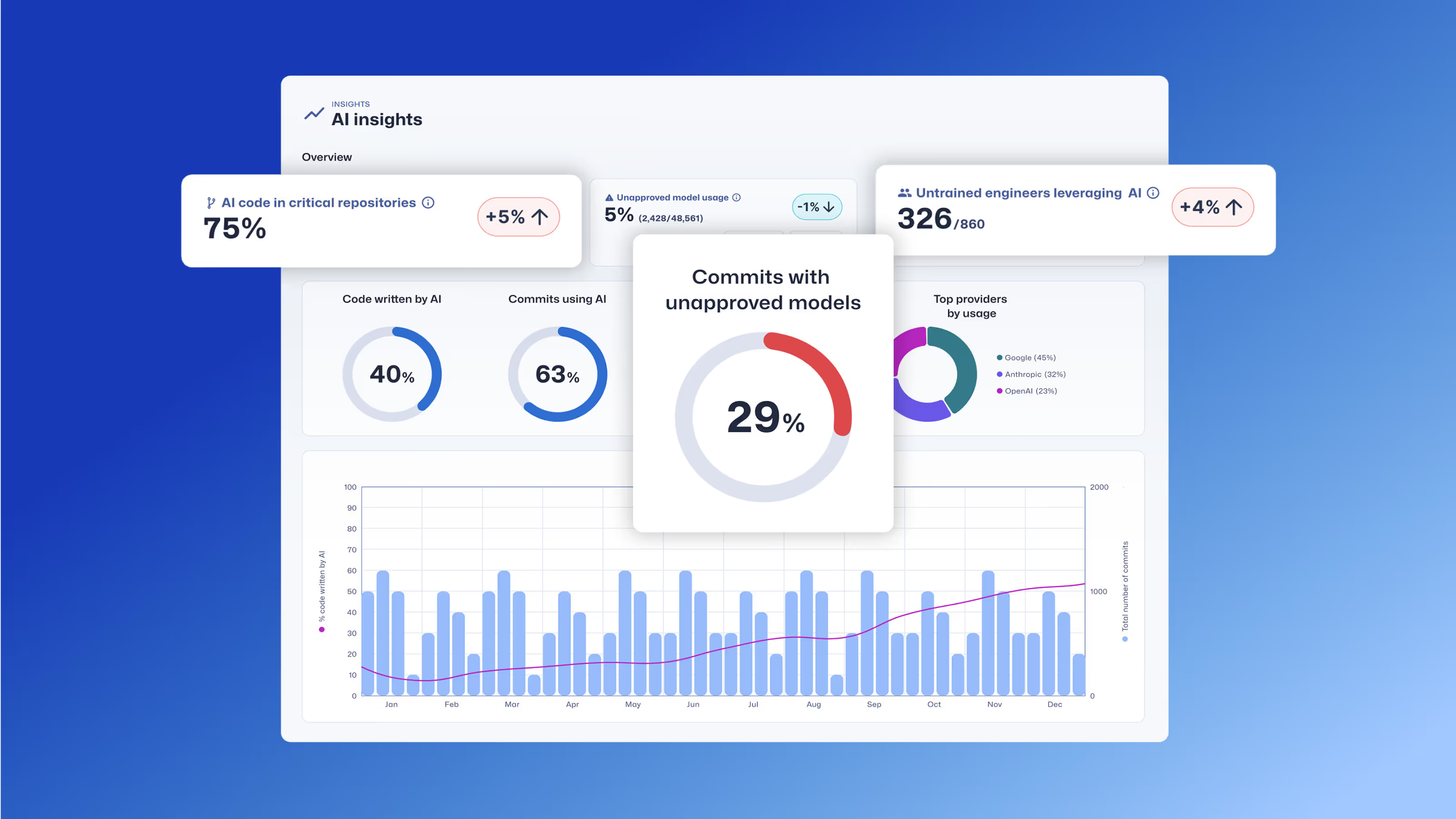

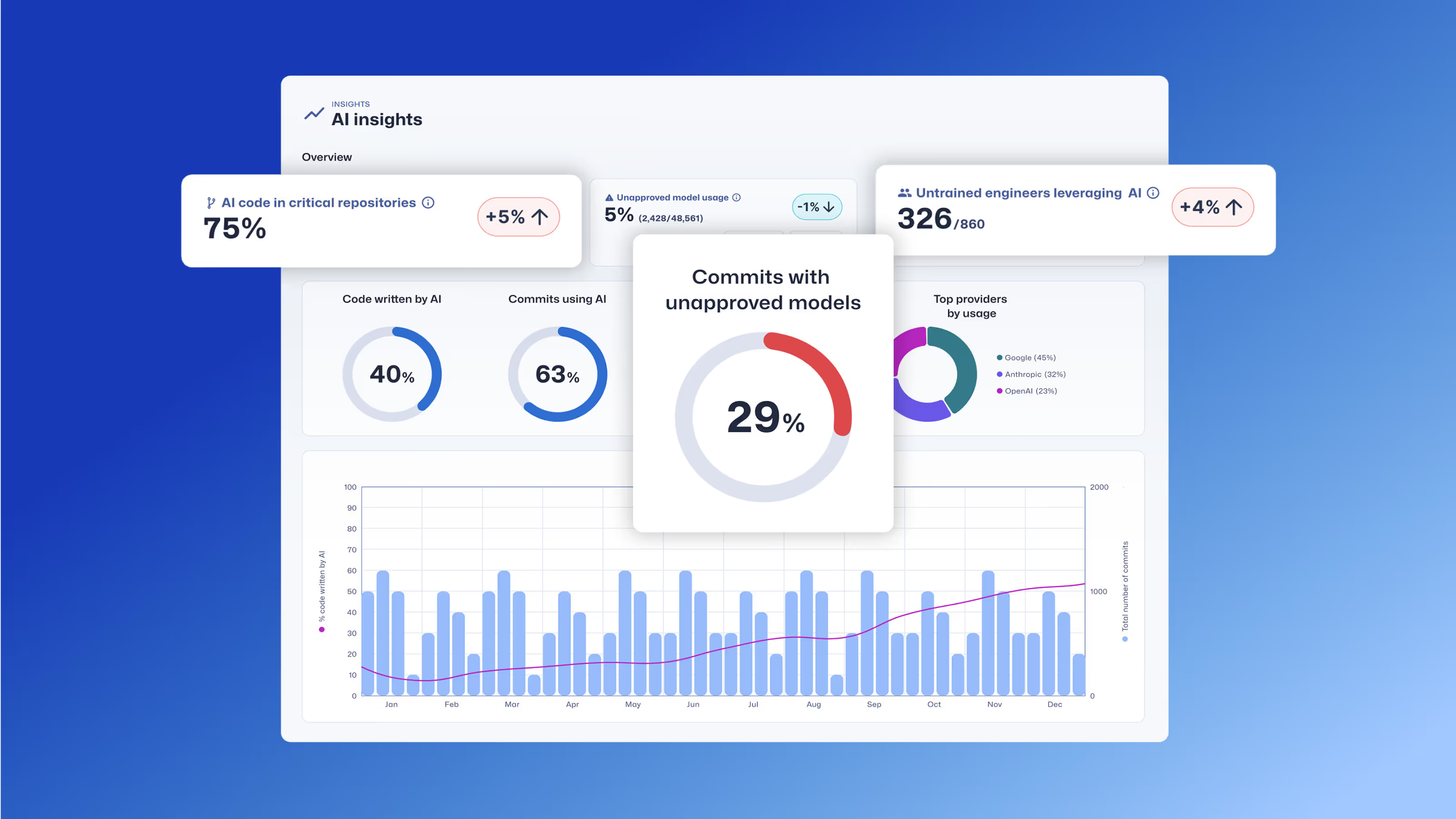

The Trust Agent: AI dashboard provides insights into AI coding tool usage, contributing developers, code repositories, and more.

The Developer's Role: The Last Line of Defense

While Trust Agent: AI gives you unprecedented governance, we know that the developer remains the last, and most critical, line of defense. The most effective way to manage AI-generated code risk is to ensure your developers have the security skills to review, validate, and secure that code. This is where SCW Learning, part of our comprehensive Developer Risk Management platform, comes in. With SCW Learning, we equip your developers with the hands-on skills they need to safely leverage AI for productivity. The SCW Learning product includes:

- SCW Trust Score: An industry-first benchmark that quantifies developer security proficiency, enabling you to identify which developers are best equipped to handle AI-generated code.

- AI Challenges: Interactive, real-world coding challenges that specifically teach developers how to find and fix vulnerabilities in AI-generated code.

- Targeted Learning: Curated learning paths from 200+ AI Challenges, Guidelines, Walkthroughs, Missions, Quests, and Courses that reinforce secure coding principles and help developers master the security skills needed to mitigate AI risks.

By combining the powerful governance of Trust Agent: AI with the skill development of our learning product, you can create a truly secure SDLC. You’ll be able to identify risk, enforce policy, and empower your developers to build code faster and more securely than ever before.

Ready to Secure Your AI Journey?

The era of AI is here, and as a product manager, my goal is to build solutions that don't just solve today's problems but anticipate tomorrow's. Trust Agent: AI is designed to do just that, giving you the visibility and control to manage AI risks while empowering your teams to innovate. The early access beta is now live, and we'd love for you to be a part of it. This isn't just about a new product; it's about pioneering a new standard for secure software development in an AI-first world.

Join the Early Access Waitlist today!


Secure Code Warrior is here for your organization to help you secure code across the entire software development lifecycle and create a culture in which cybersecurity is top of mind. Whether you're an AppSec manager, developer, CISO, or anyone else involved in security, we can help your organization reduce the risks associated with insecure code.

Tamim Noorzad, Director of Product Management at Secure Code Warrior, is an engineer turned product manager. He has more than 17 years of experience and specializes in SaaS 0-to-1 products.
