SCW Trust Agent: AI - Visibility and Governance for Your AI-Assisted SDLC

The widespread adoption of AI coding tools is transforming software development. With 78% of developers [1] now using AI to increase productivity, the speed of innovation has never been greater. But this rapid acceleration comes with a critical risk.

Studies reveal that as much as 50% of functionally correct, AI-generated code is insecure [2]. This isn’t a small bug; it’s a systemic challenge. It means every time a developer uses a tool like GitHub Copilot or ChatGPT, they could be unknowingly introducing new vulnerabilities into your codebase. The result is a dangerous mix of speed and security risks that most organizations are not equipped to manage.

The Challenge of “Shadow AI”

Without a way to manage AI coding tool usage, CISOs, AppSec teams, and engineering leaders are exposed to new risks they can't see or measure. How can you answer crucial questions like:

- What percentage of our code is AI-generated?

- Which MCP (Model Context Protocol) servers are being used?

- What unapproved models are being used?

- What vulnerabilities are being generated by different models?

The lack of visibility and governance creates a new layer of risk and uncertainty. It’s the very definition of “shadow IT,” but for your codebase.

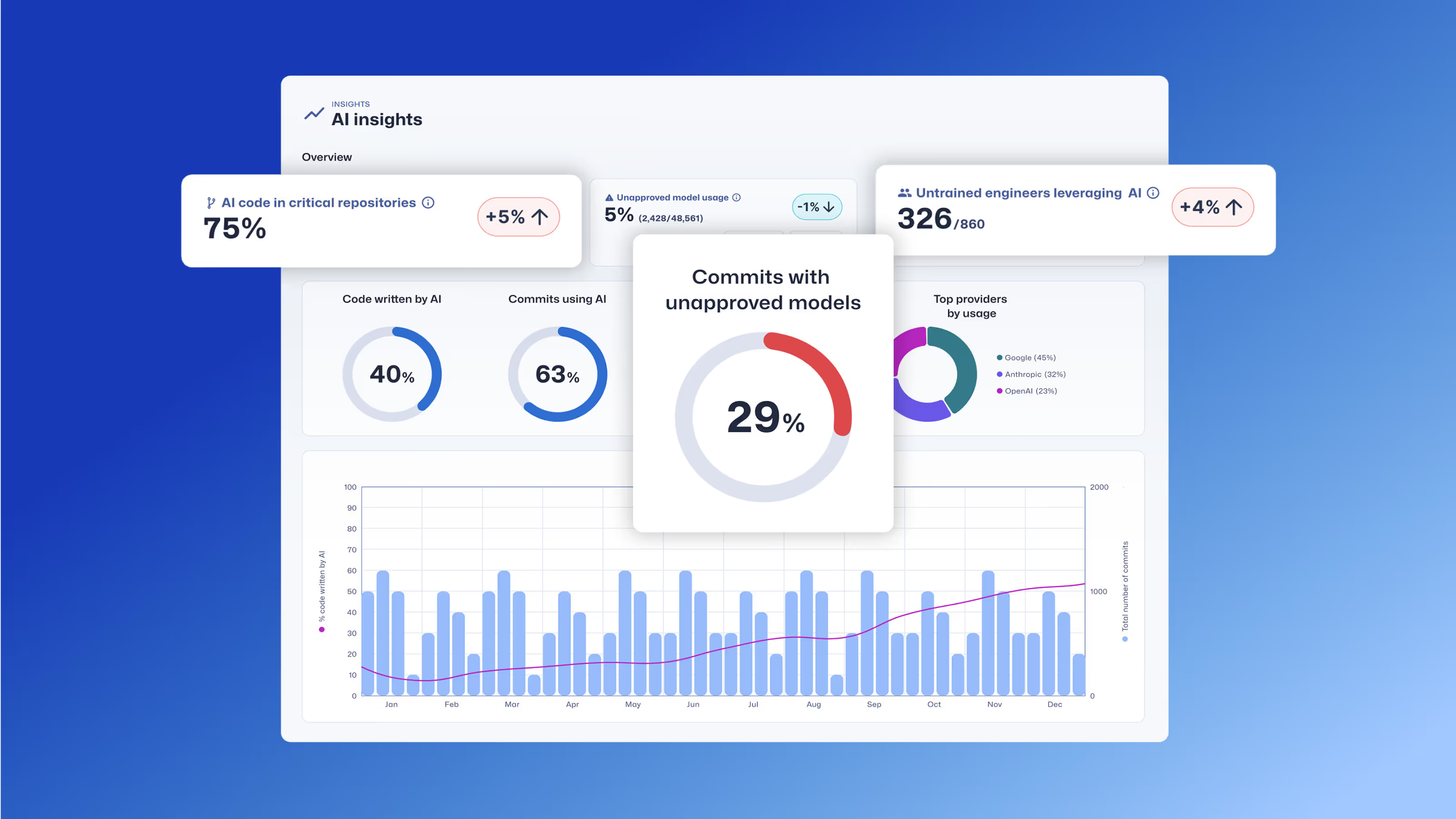

Our Solution – Trust Agent: AI

We believe you can have both speed and security. We're proud to launch Trust Agent: AI, a powerful new capability of our Trust Agent product that provides the deep observability and control you need to confidently embrace AI in your software development lifecycle. Using a unique combination of signals, Trust Agent: AI provides:

- Visibility: See which developers are using which AI coding tools, LLMs and MCPs, and on what codebases. No more "shadow AI."

- Risk Metrics: Correlate AI-generated code with each developer's skill level and the vulnerabilities it introduces, so you can understand the true risk at the commit level.

- Governance: Automate policy enforcement to ensure AI-enabled developers meet secure coding standards.

The Trust Agent: AI dashboard provides insights into AI coding tool usage, contributing developer, code repository, and more.
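
To make the visibility questions above more concrete, here is a minimal sketch of how two of them (the share of AI-generated code and the use of unapproved models) could be computed from per-commit records. This is not Trust Agent: AI's API or data model; the `CommitRecord` fields, the `APPROVED_MODELS` allowlist, the model names, and the sample data are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Set

# Hypothetical allowlist; a real policy would come from your own governance rules.
APPROVED_MODELS: Set[str] = {"model-a", "model-b"}


@dataclass(frozen=True)
class CommitRecord:
    """Hypothetical per-commit record (an assumption, not a product schema)."""
    commit_id: str
    developer: str
    lines_changed: int
    ai_lines: int          # lines attributed to an AI coding tool
    model: Optional[str]   # model that produced the AI lines, if any


def ai_code_percentage(commits: List[CommitRecord]) -> float:
    """Return the percentage of changed lines attributed to AI tools."""
    total = sum(c.lines_changed for c in commits)
    if total == 0:
        return 0.0
    return 100.0 * sum(c.ai_lines for c in commits) / total


def unapproved_model_commits(
    commits: List[CommitRecord],
    approved: Set[str] = APPROVED_MODELS,
) -> List[CommitRecord]:
    """Return commits whose AI-generated lines came from a model not on the allowlist."""
    return [c for c in commits if c.model is not None and c.model not in approved]


# Made-up sample data for illustration only.
SAMPLE = [
    CommitRecord("c1", "dev-1", lines_changed=100, ai_lines=40, model="model-a"),
    CommitRecord("c2", "dev-2", lines_changed=60, ai_lines=60, model="model-x"),
    CommitRecord("c3", "dev-1", lines_changed=40, ai_lines=0, model=None),
]

if __name__ == "__main__":
    print(f"AI-generated share: {ai_code_percentage(SAMPLE):.1f}%")
    for commit in unapproved_model_commits(SAMPLE):
        print(f"Unapproved model {commit.model} in {commit.commit_id} by {commit.developer}")
```

In practice the hard part is attribution, that is, reliably knowing which lines and which model a commit involved; the sketch simply assumes that signal already exists.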

The Developer's Role: The Last Line of Defense

While Trust Agent: AI gives you unprecedented governance, we know that the developer remains the last, and most critical, line of defense. The most effective way to manage AI-generated code risk is to ensure your developers have the security skills to review, validate, and secure that code. This is where SCW Learning, part of our comprehensive Developer Risk Management platform, comes in. With SCW Learning, we equip your developers with the hands-on skills they need to safely leverage AI for productivity. The SCW Learning product includes:

- SCW Trust Score: An industry-first benchmark that quantifies developer security proficiency, enabling you to identify which developers are best equipped to handle AI-generated code.

- AI Challenges: Interactive, real-world coding challenges that specifically teach developers how to find and fix vulnerabilities in AI-generated code.

- Targeted Learning: Curated learning paths from 200+ AI Challenges, Guidelines, Walkthroughs, Missions, Quests, and Courses that reinforce secure coding principles and help developers master the security skills needed to mitigate AI risks.

By combining the powerful governance of Trust Agent: AI with the skill development of our learning product, you can create a truly secure SDLC. You’ll be able to identify risk, enforce policy, and empower your developers to build code faster and more securely than ever before.

Ready to Secure Your AI Journey?

The era of AI is here, and as a product manager, my goal is to build solutions that don't just solve today's problems but anticipate tomorrow's. Trust Agent: AI is designed to do just that, giving you the visibility and control to manage AI risks while empowering your teams to innovate. The early access beta is now live, and we'd love for you to be a part of it. This isn't just about a new product; it's about pioneering a new standard for secure software development in an AI-first world.

Join the Early Access Waitlist today!


Secure Code Warrior is here to help your organization secure code across the entire software development lifecycle and build a culture in which cybersecurity is a priority. Whether you are an AppSec manager, a developer, a CISO, or anyone else involved in security, we can help your organization reduce the risks associated with insecure code.

Tamim Noorzad, Director of Product Management at Secure Code Warrior, is an engineer turned product manager with more than 17 years of experience, specializing in 0-to-1 SaaS products.

The widespread adoption of AI coding tools is transforming software development. With 78% of developers1 now using AI to increase productivity, the speed of innovation has never been greater. But this rapid acceleration comes with a critical risk.

Studies reveal that as much as 50% of functionally correct, AI-generated code is insecure2. This isn’t a small bug; it’s a systemic challenge. It means every time a developer uses a tool like GitHub Copilot or ChatGPT, they could be unknowingly introducing new vulnerabilities into your codebase. The result is a dangerous mix of speed and security risks that most organizations are not equipped to manage.

The Challenge of “Shadow AI”

Without a way to manage AI coding tool usage, CISO, AppSec, and engineering leaders are exposed to new risks they can't see or measure. How can you answer crucial questions like:

- What percentage of our code is AI-generated?

- What MCPs are being used?

- What unapproved models are being used?

- What vulnerabilities are being generated by different models?

The lack of visibility and governance creates a new layer of risk and uncertainty. It’s the very definition of “shadow IT,” but for your codebase.

Our Solution – Trust Agent: AI

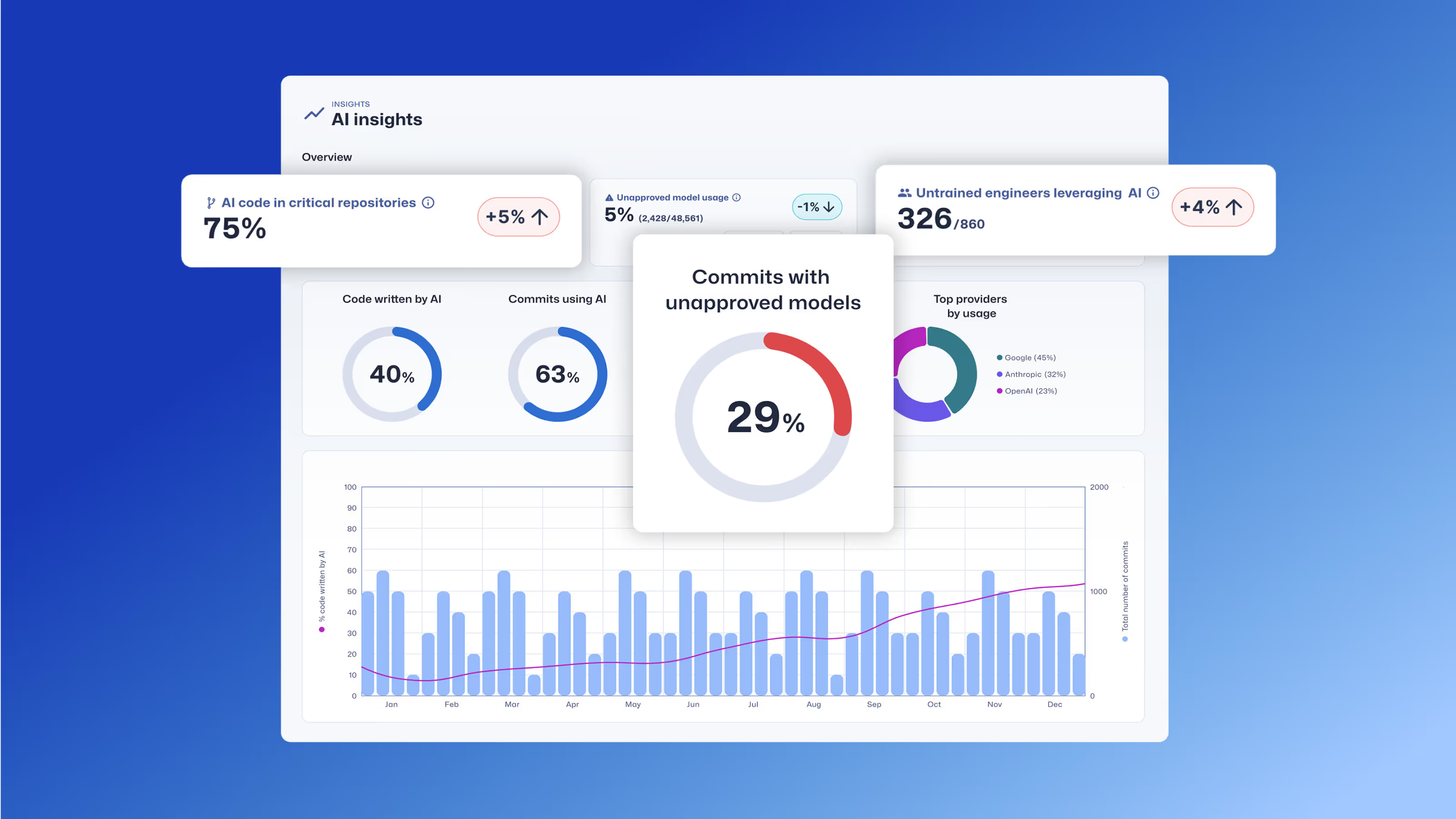

We believe you can have both speed and security. We're proud to launch Trust Agent: AI, a powerful new capability of our Trust Agent product that provides the deep observability and control you need to confidently embrace AI in your software development lifecycle. Using a unique combination of signals, Trust Agent: AI provides:

- Visibility: See which developers are using which AI coding tools, LLMs and MCPs, and on what codebases. No more "shadow AI."

- Risk Metrics: Connect AI-generated code to a developer's skill level and introduced vulnerabilities to understand the true risk being introduced, at the commit level.

- Governance: Automate policy enforcement to ensure AI-enabled developers meet secure coding standards.

The Trust Agent: AI dashboard provides insights into AI coding tool usage, contributing developer, code repository, and more.

The Developer's Role: The Last Line of Defense

While Trust Agent: AI gives you unprecedented governance, we know that the developer remains the last, and most critical, line of defense. The most effective way to manage AI-generated code risk is to ensure your developers have the security skills to review, validate, and secure that code. This is where SCW Learning, part of our comprehensive Developer Risk Management platform, comes in. With SCW Learning, we equip your developers with the hands-on skills they need to safely leverage AI for productivity. The SCW Learning product includes:

- SCW Trust Score: An industry-first benchmark that quantifies developer security proficiency, enabling you to identify which developers are best equipped to handle AI-generated code.

- AI Challenges: Interactive, real-world coding challenges that specifically teach developers how to find and fix vulnerabilities in AI-generated code.

- Targeted Learning: Curated learning paths from 200+ AI Challenges, Guidelines, Walkthroughs, Missions, Quests, and Courses that reinforce secure coding principles and help developers master the security skills needed to mitigate AI risks.

By combining the powerful governance of Trust Agent: AI with the skill development of our learning product, you can create a truly secure SDLC. You’ll be able to identify risk, enforce policy, and empower your developers to build code faster and more securely than ever before.

Ready to Secure Your AI Journey?

The era of AI is here, and as a product manager, my goal is to build solutions that don't just solve today's problems but anticipate tomorrow's. Trust Agent: AI is designed to do just that, giving you the visibility and control to manage AI risks while empowering your teams to innovate. The early access beta is now live, and we'd love for you to be a part of it. This isn't just about a new product; it's about pioneering a new standard for secure software development in an AI-first world.

Join the Early Access Waitlist today!

Cliquez sur le lien ci-dessous et téléchargez le PDF de cette ressource.

Secure Code Warrior est là pour aider votre organisation à sécuriser le code tout au long du cycle de développement logiciel et à créer une culture dans laquelle la cybersécurité est une priorité. Que vous soyez responsable de la sécurité des applications, développeur, responsable de la sécurité informatique ou toute autre personne impliquée dans la sécurité, nous pouvons aider votre organisation à réduire les risques associés à un code non sécurisé.

Afficher le rapportRéservez une démoTamim Noorzad, directeur de la gestion des produits chez Secure Code Warrior, est un ingénieur devenu chef de produit avec plus de 17 ans d'expérience, spécialisé dans les produits SaaS 0 à 1.

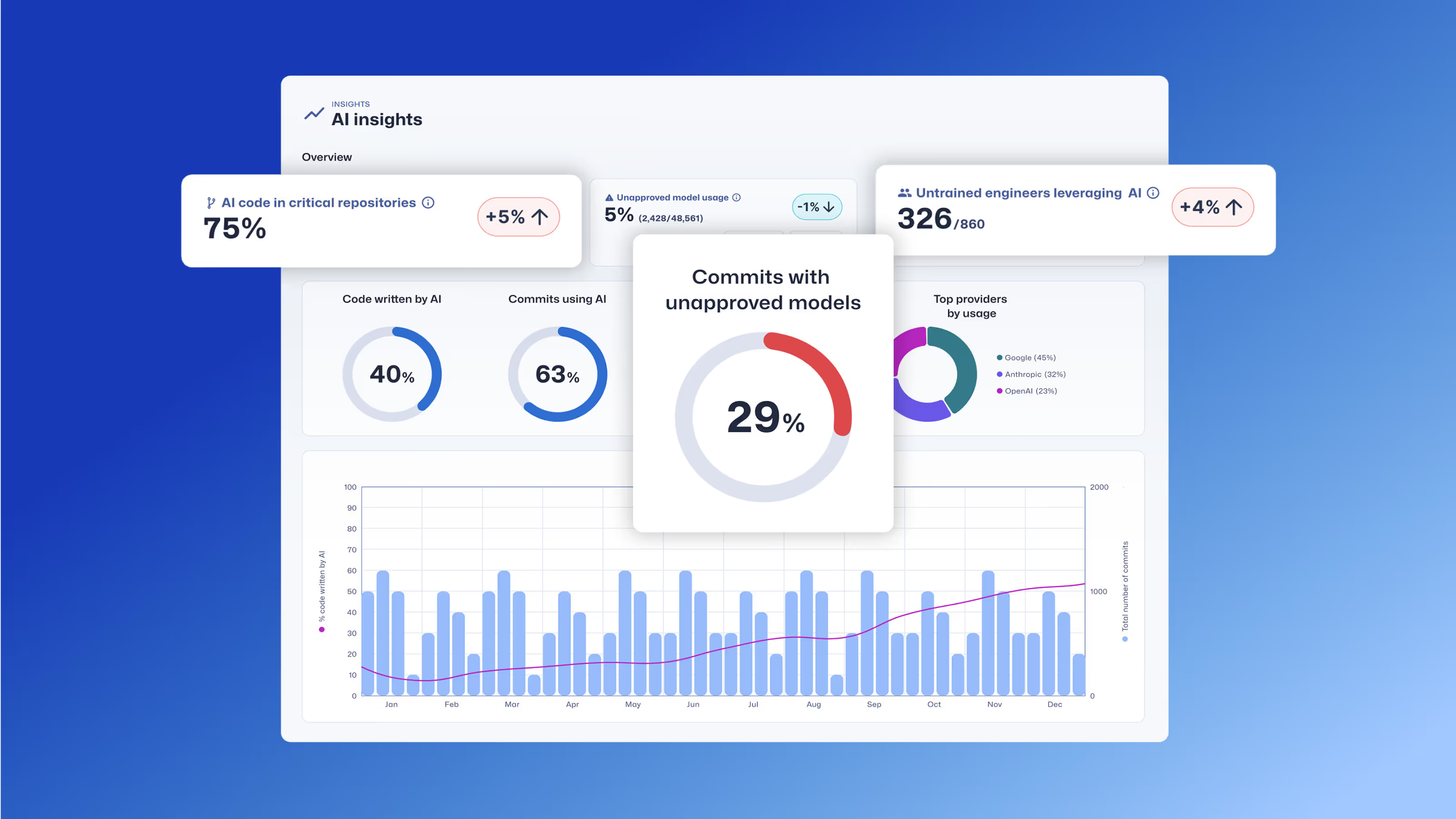
