SCW Trust Agent: AI - Visibility and Governance for Your AI-Assisted SDLC

The widespread adoption of AI coding tools is transforming software development. With 78% of developers [1] now using AI to increase productivity, the speed of innovation has never been greater. But this rapid acceleration comes with a critical risk.

Studies reveal that as much as 50% of functionally correct, AI-generated code is insecure [2]. This isn’t a small bug; it’s a systemic challenge. It means every time a developer uses a tool like GitHub Copilot or ChatGPT, they could be unknowingly introducing new vulnerabilities into your codebase. The result is a dangerous mix of speed and security risks that most organizations are not equipped to manage.

The Challenge of “Shadow AI”

Without a way to manage AI coding tool usage, CISO, AppSec, and engineering leaders are exposed to new risks they can't see or measure. How can you answer crucial questions like:

- What percentage of our code is AI-generated?

- Which MCP (Model Context Protocol) servers are being used?

- What unapproved models are being used?

- What vulnerabilities are being generated by different models?

The lack of visibility and governance creates a new layer of risk and uncertainty. It’s the very definition of “shadow IT,” but for your codebase.

Our Solution – Trust Agent: AI

We believe you can have both speed and security. We're proud to launch Trust Agent: AI, a powerful new capability of our Trust Agent product that provides the deep observability and control you need to confidently embrace AI in your software development lifecycle. Using a unique combination of signals, Trust Agent: AI provides:

- Visibility: See which developers are using which AI coding tools, LLMs, and MCPs, and on what codebases. No more "shadow AI."

- Risk Metrics: Connect AI-generated code to a developer's skill level and to the vulnerabilities it introduces, so you can understand the true risk at the commit level.

- Governance: Automate policy enforcement to ensure AI-enabled developers meet secure coding standards.

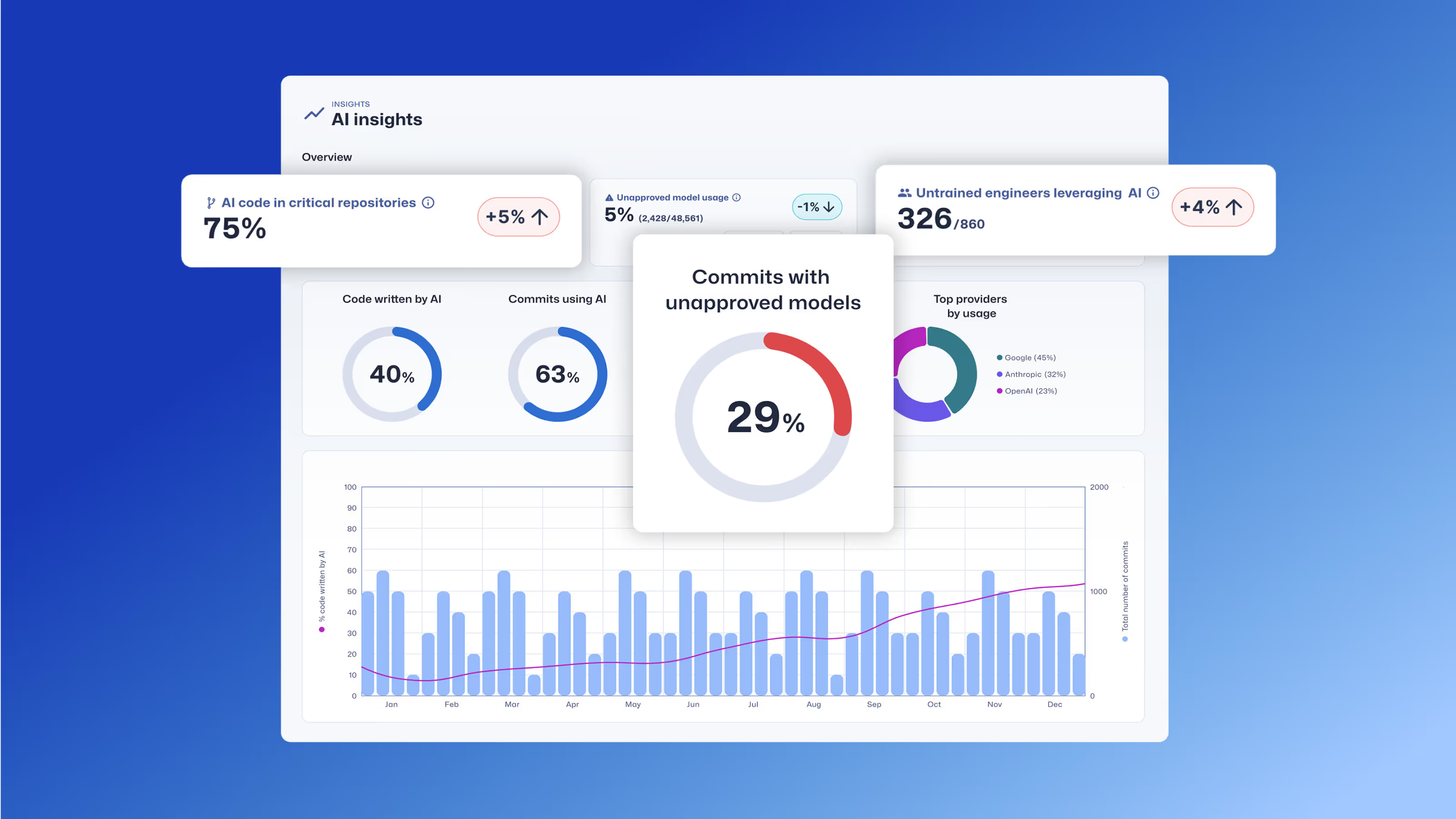

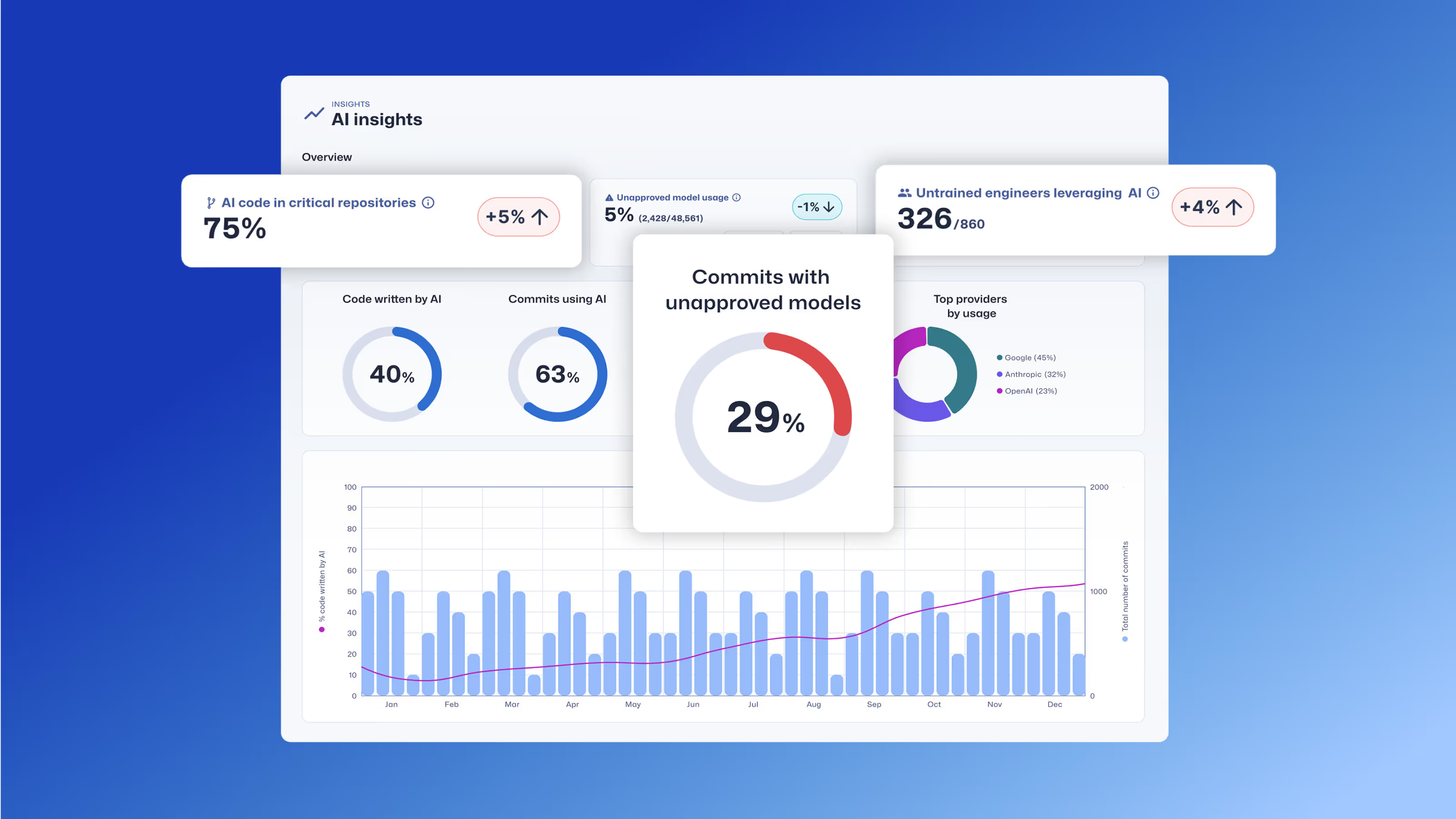

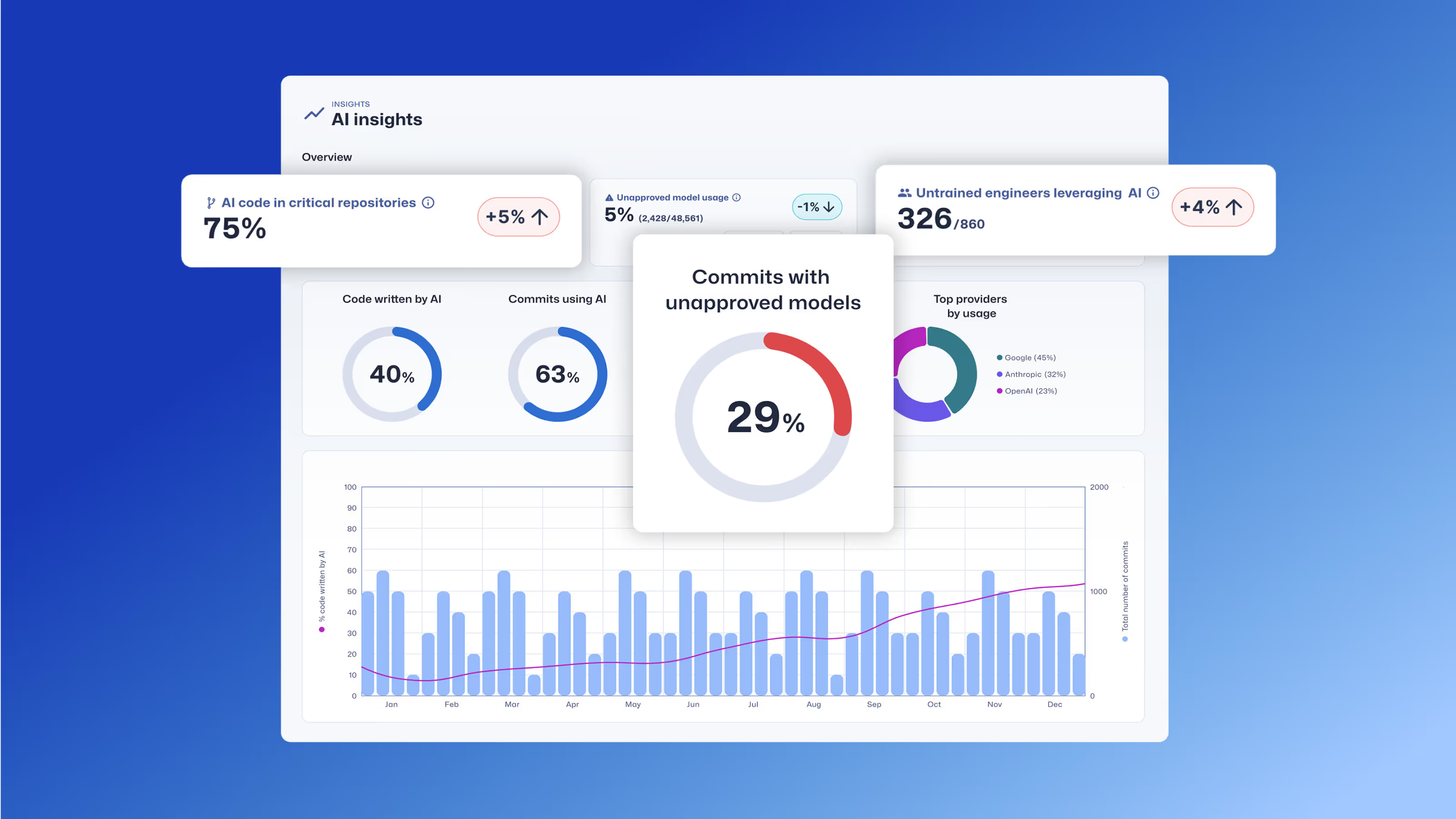

The Trust Agent: AI dashboard provides insights into AI coding tool usage, contributing developers, code repositories, and more.
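To make the visibility and governance questions above concrete, here is a minimal sketch of how they could be answered from per-commit records. It is purely illustrative and does not reflect how Trust Agent: AI is implemented or what its API looks like: the `CommitRecord` fields, the `APPROVED_MODELS` allow-list, the model names, and both helper functions are assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set

# Hypothetical allow-list; a real policy would come from your own governance settings.
APPROVED_MODELS: Set[str] = {"model-a", "model-b"}


@dataclass(frozen=True)
class CommitRecord:
    """One commit with illustrative AI-attribution fields (all assumed)."""
    author: str
    repo: str
    model: Optional[str]  # None means no AI tool was detected for this commit
    ai_lines: int         # lines attributed to an AI tool
    total_lines: int      # all lines changed in the commit


def ai_code_percentage(commits: Iterable[CommitRecord]) -> float:
    """Share of changed lines attributed to AI, as a percentage."""
    commits = list(commits)
    total = sum(c.total_lines for c in commits)
    if total == 0:
        return 0.0
    return 100.0 * sum(c.ai_lines for c in commits) / total


def unapproved_usage(
    commits: Iterable[CommitRecord],
    approved: Set[str] = APPROVED_MODELS,
) -> List[CommitRecord]:
    """Commits that used a model which is not on the allow-list."""
    return [c for c in commits if c.model is not None and c.model not in approved]


# Made-up sample data, for illustration only.
commits = [
    CommitRecord("alice", "payments", "model-a", 40, 100),
    CommitRecord("bob", "payments", "model-x", 30, 60),
    CommitRecord("carol", "web", None, 0, 40),
]

print(ai_code_percentage(commits))
print([c.author for c in unapproved_usage(commits)])
```

With this sample data, the first helper answers "what percentage of our code is AI-generated?" and the second surfaces commits that used a model outside the allow-list. A real system would also need a reliable way to attribute lines to AI tools, which this sketch simply takes as given.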

The Developer's Role: The Last Line of Defense

While Trust Agent: AI gives you unprecedented governance, we know that the developer remains the last, and most critical, line of defense. The most effective way to manage AI-generated code risk is to ensure your developers have the security skills to review, validate, and secure that code. This is where SCW Learning, part of our comprehensive Developer Risk Management platform, comes in. With SCW Learning, we equip your developers with the hands-on skills they need to safely leverage AI for productivity. The SCW Learning product includes:

- SCW Trust Score: An industry-first benchmark that quantifies developer security proficiency, enabling you to identify which developers are best equipped to handle AI-generated code.

- AI Challenges: Interactive, real-world coding challenges that specifically teach developers how to find and fix vulnerabilities in AI-generated code.

- Targeted Learning: Curated learning paths from 200+ AI Challenges, Guidelines, Walkthroughs, Missions, Quests, and Courses that reinforce secure coding principles and help developers master the security skills needed to mitigate AI risks.

By combining the powerful governance of Trust Agent: AI with the skill development of our learning product, you can create a truly secure SDLC. You’ll be able to identify risk, enforce policy, and empower your developers to build code faster and more securely than ever before.

Ready to Secure Your AI Journey?

The era of AI is here, and as a product manager, my goal is to build solutions that don't just solve today's problems but anticipate tomorrow's. Trust Agent: AI is designed to do just that, giving you the visibility and control to manage AI risks while empowering your teams to innovate. The early access beta is now live, and we'd love for you to be a part of it. This isn't just about a new product; it's about pioneering a new standard for secure software development in an AI-first world.

Join the Early Access Waitlist today!

Tamim Noorzad, a Director of Product Management at Secure Code Warrior, is an engineer turned product manager with over 17 years of experience, specializing in SaaS 0-to-1 products.
