Stop AI software risk before it starts

Ship secure, high-quality code at every commit – no matter who (or what) wrote it.

The control plane for AI-driven development

Make AI-driven development visible, secure, and resilient—preventing vulnerabilities before production so teams can move fast with confidence.

Enterprise governance at scale, AI development with confidence.

Establish policy, gain enterprise-wide visibility, and prevent uncontrolled AI-generated risk across the development lifecycle.

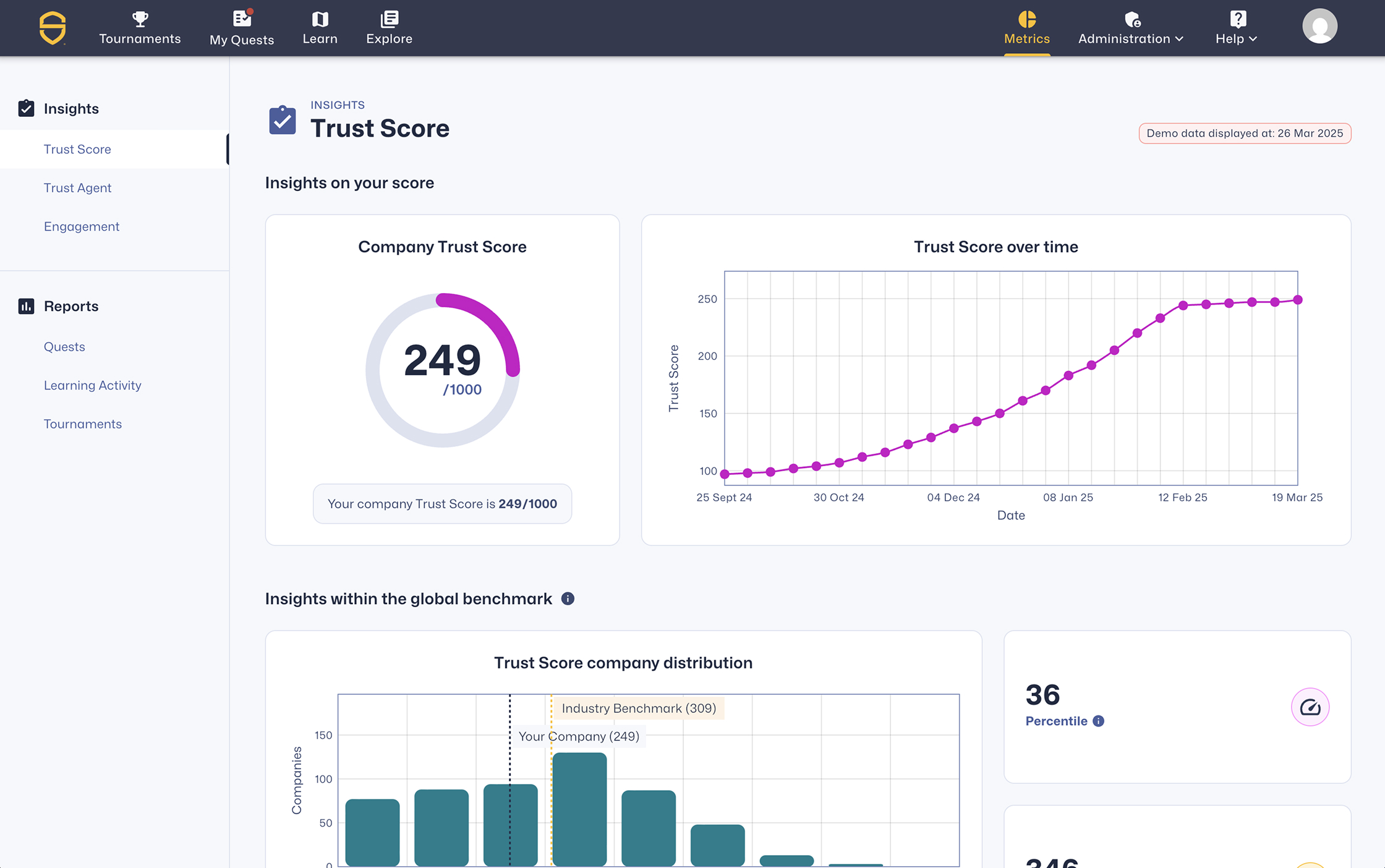

Gain visibility into how much code AI creates

- Define and enforce secure development policies across AI workflows

- Measure software risk and governance posture at the enterprise level

- Demonstrate control, compliance, and trust with audit-level reporting

Prevent AI-introduced vulnerabilities at commit

Make AI usage visible, enforce secure coding policy at commit, and stop vulnerabilities from being introduced in both human-written and AI-assisted code. Apply your existing security standards to AI-driven development without slowing delivery.

Reduce introduced vulnerabilities by 53%+

- Build secure coding capability across developers and AI-driven workflows

- Deliver precise, policy-aligned guidance directly into the tools where code is written

- Gain visibility into AI-generated code and its impact on software risk

Scale AI development without slowing down

Make AI-assisted development secure, efficient, and measurable — so teams ship faster without rework or review bottlenecks.

Reduce MTTR by up to 82%

- Drive measurable skill improvement with adaptive learning and hands-on labs

- Integrate secure coding expertise into the IDEs, repos, pipelines, and security tools you already use

- Access continuously updated learning content across 70+ languages, 600+ vulnerabilities, and 11,000+ activities

Secure and built for the tools you already use

Make AI-driven development risk visible

See how AI coding is used, the risk it creates, and the behavior behind it—so you can stop vulnerabilities before they ship.

Reduce vulnerabilities at the source

Hands-on secure coding and AI security learning delivered in real-world developer workflows, helping organizations reduce introduced vulnerabilities by 53%+.

Enforce developer and AI policy control at scale

Enable and control your AI-driven software development lifecycle while preventing risk, enforcing policy, and proving trust before code reaches production.

Secure AI-driven development before it ships

See developer risk, enforce policy, and prevent vulnerabilities across your software development lifecycle.

Resources to get you started

Understand AI software governance and how to reduce AI-driven software risk

Learn what AI software governance is, why it matters, and how Secure Code Warrior helps organizations safely adopt AI-assisted development.
