Commit-level enforcement for AI software governance

Trust Agent enforces AI software governance at the point of commit, correlating AI model usage, developer risk signals, and secure coding policies to stop vulnerabilities from being introduced before code reaches production.

AI is writing code. Your security controls still lag behind.

AI-assisted development is now embedded across modern software delivery:

- AI coding assistants generating production-ready code

- Agent-based workflows operating beyond developer desktops

- Cloud-hosted coding bots contributing across repositories

- Multi-language commits landing at unprecedented velocity

Traditional developer training measures course completion. Static scanners detect vulnerabilities after code is written. AI software governance requires enforceable control at commit, before risk reaches production.

The enforcement engine of AI software governance

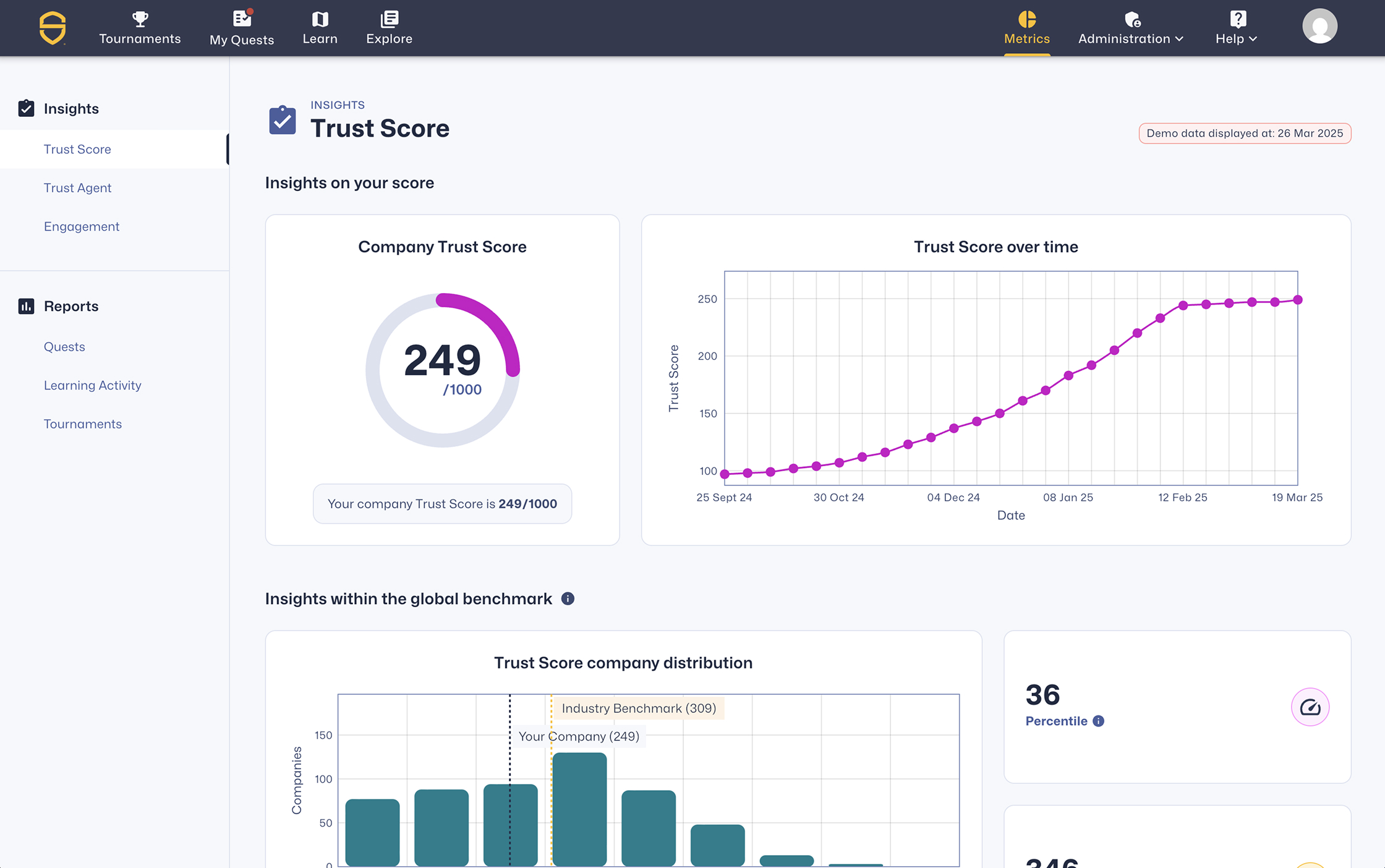

Trust Agent transforms visibility into control. It correlates commit metadata, AI model usage, Model Context Protocol (MCP) activity, and defined policy thresholds to enforce governance at commit without slowing development.

Prevent risk. Prove control. Ship faster.

Trust Agent reduces AI-introduced vulnerabilities, shortens remediation cycles, prioritizes high-risk commits, and strengthens developer accountability across AI-assisted development.

Real-time enforcement at commit

Traditional application security tools detect vulnerabilities after code is written. Trust Agent enforces AI model restrictions and secure coding policies at commit, stopping vulnerable code before it enters production.

Developer discovery & intelligence

Continuously identify contributors and track their tool usage, commit activity, and verified secure coding competency.

AI tool & model traceability

Maintain commit-level visibility into which AI tools, models, and agents contribute across repositories.

LLM security benchmarking

Apply Secure Code Warrior’s LLM security benchmark data to decide which AI models to approve and how they may be used.

Commit-level risk scoring & governance

Score the risk of AI-assisted commits, then log, warn on, or block non-compliant code at the point of commit.

Adaptive risk remediation

Trigger targeted learning based on real commit behavior to close skill gaps and prevent recurring risk.

Govern AI-assisted development in five steps

Connect & Observe

Integrate with repositories and CI systems to capture commit metadata and AI model usage signals.

Trace AI Influence

Identify which tools and models contributed to specific commits across projects.

Govern AI-driven development before it ships

Trace AI influence. Correlate risk at commit. Enforce control across your software lifecycle.

Commit-level governance for AI-assisted development

Learn how Trust Agent provides commit-level visibility, developer trust scoring, and enforceable AI governance controls.